
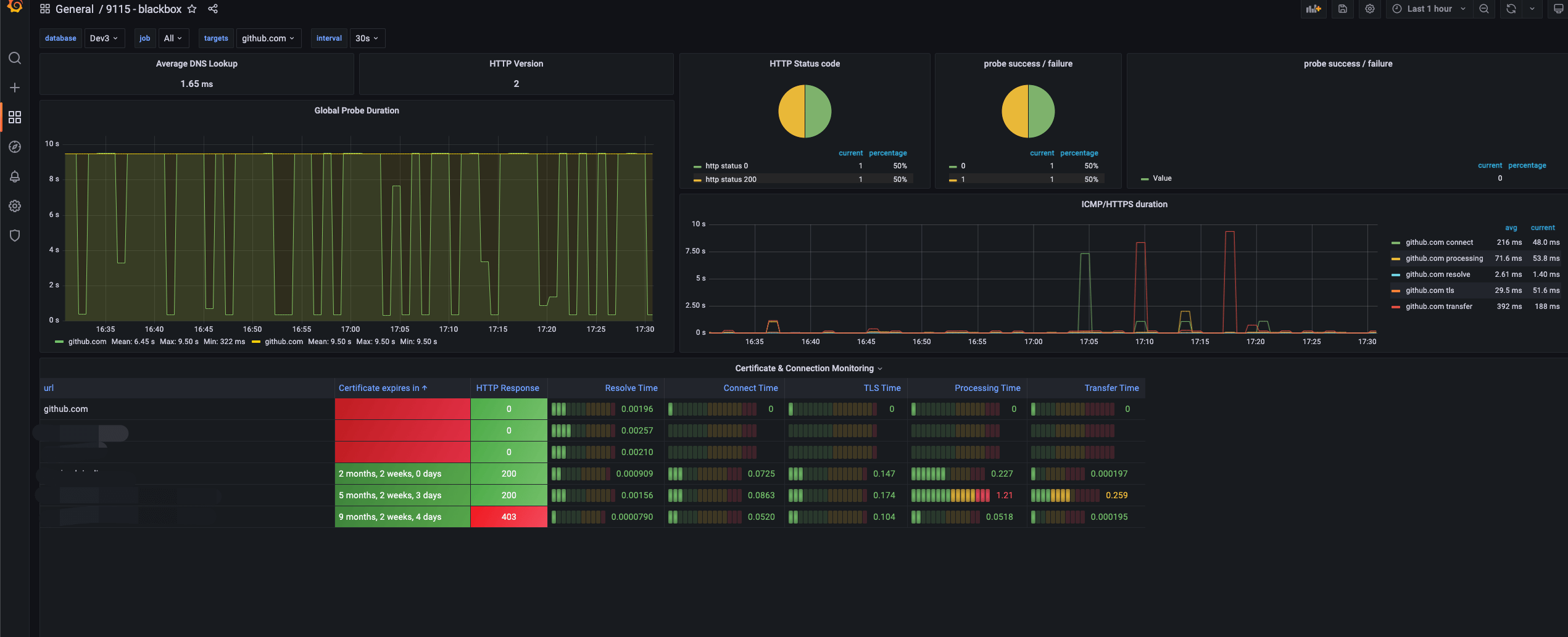

7/26/2023 Blackbox grafana

One of the great things about Grafana is that it is extremely easy to use (and publish) dashboards that others have created. There are many (many) dashboards for different systems, and many for Prometheus and its many exporters (like node_exporter, blackbox, etc.). I've used these dashboards (which I've then customised further to suit my needs), including one for viewing blackbox HTTP endpoint statuses. You can see many other dashboards for Grafana/Prometheus here. It's incredibly simple to add a dashboard to Grafana.

In the data source settings, prom:9090 refers to the docker-compose defined hostname for our Prometheus container.

Installing and configuring node_exporter (to monitor server stats)

By default node_exporter enables a large number of "collectors" (modules which collect certain information from the machine). See here for a list of collectors enabled by default (and what info they collect).

node_exporter can be run from a Docker container, but that's not recommended, since it should run directly on the host hardware to collect stats. Installing node_exporter can be done by downloading a recent version, untarring it and executing the binary. We're going to do an extra step here and manage node_exporter with systemd (so it starts on server boot etc.).

You can find a link for the latest version at. At the time of this writing the latest stable version for linux-amd64 was node_exporter-0.18.1. We'll download it, untar it, and then move it to /opt/node_exporter.

The domain is an external address: I'm using a reverse proxy to secure (SSL) and route traffic to the internal 4000 port of Grafana. See Apache reverse-proxy SSL to multiple server applications for more information on how to implement this.
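
The hostnames used in this post (prom for Prometheus, bbexp for the blackbox exporter) come from docker-compose service names. As a rough sketch of what such a compose file could look like: the image names are the publicly published ones, but the mounted file path, the published ports and the `4000:3000` mapping for Grafana are my assumptions, not taken from the original setup.

```yaml
# docker-compose.yml (illustrative sketch; paths and port mappings are assumptions)
version: "3"
services:
  prom:                       # reachable from other containers as prom:9090
    image: prom/prometheus
    volumes:
      # assumed location of the config file on the host
      - ./prometheus.yml:/etc/prometheus/prometheus.yml:ro
    ports:
      - "9090:9090"
  bbexp:                      # reachable from other containers as bbexp:9115
    image: prom/blackbox-exporter
  grafana:
    image: grafana/grafana
    ports:
      # assumption: host port 4000 (the reverse proxy target) maps to
      # Grafana's default container port 3000
      - "4000:3000"
```

Because the containers share the compose network, service names resolve as hostnames, which is why Grafana can use prom:9090 as its Prometheus address.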

You'll note that I've defined my domain as.

    replacement: bbexp:9115  # The blackbox exporter's real hostname:port.
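
For context, the `replacement: bbexp:9115` line normally sits at the end of a blackbox scrape job's relabelling rules. The following is a sketch based on the pattern in the blackbox exporter's own documentation; the job name, the `http_2xx` module and the `https://example.com` target are placeholders I've assumed, not values from this setup.

```yaml
# Fragment of prometheus.yml (sketch; job name, module and target are assumed)
scrape_configs:
  - job_name: 'blackbox'
    metrics_path: /probe
    params:
      module: [http_2xx]          # probe module defined in the exporter's config
    static_configs:
      - targets:
          - https://example.com   # hypothetical endpoint to probe
    relabel_configs:
      # Pass the original target to the exporter as the ?target= parameter.
      - source_labels: [__address__]
        target_label: __param_target
      # Keep the probed URL as the instance label on the resulting series.
      - source_labels: [__param_target]
        target_label: instance
      # Send the actual scrape to the exporter instead of the target.
      - target_label: __address__
        replacement: bbexp:9115   # The blackbox exporter's real hostname:port.
```

The effect is that Prometheus always scrapes bbexp:9115, while each time series is still labelled with the endpoint that was probed.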

    #scrape_interval: 1m # Set the interval to scrape data.
    #evaluation_interval: 1m # Set interval to evaluate rules.
    #scrape_timeout: 10s # Set scrape timeout period.
    # Load rules once and periodically evaluate them according to the global 'evaluation_interval'.
    # A scrape configuration containing exactly one endpoint to scrape:
    # The job name is added as a label `job=<job_name>` to any timeseries scraped from this config.
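
Put together, those comments belong to a prometheus.yml along the following lines. This is only a sketch: the three global values are the ones shown commented out above (they match Prometheus's defaults), while the job names and the node_exporter target `myserver.example.com:9100` are hypothetical (9100 is node_exporter's default port).

```yaml
# prometheus.yml (illustrative sketch; job names and targets are assumptions)
global:
  scrape_interval: 1m        # Set the interval to scrape data.
  evaluation_interval: 1m    # Set interval to evaluate rules.
  scrape_timeout: 10s        # Set scrape timeout period.

# Load rules once and periodically evaluate them according to the
# global 'evaluation_interval'. None are defined in this sketch.
rule_files: []

scrape_configs:
  # Prometheus scraping its own metrics endpoint.
  - job_name: 'prometheus'
    static_configs:
      - targets: ['localhost:9090']

  # Host statistics from node_exporter running directly on the server.
  - job_name: 'node'
    static_configs:
      - targets: ['myserver.example.com:9100']   # hypothetical host
```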
